Spatial Experience in Humans and Machines

PhD Dissertation. Massachusetts Institute of Technology. 2020

Spatial experience is the process by which we locate ourselves within our environment, and understand and interact with it. Understanding spatial experience has been a major endeavor within the social sciences, the arts, and architecture throughout history, giving rise to recent theories of embodied and enacted cognition. Understanding spatial experience has also been a pursuit of computer science. However, despite substantial advances in artificial intelligence and computer vision, there has yet to be a computational model of human spatial experience. What are the computations involved in human spatial experience? Can we develop machines that can describe and represent spatial experience?

In this dissertation, I take a step towards developing a computational account of human spatial experience and outline the steps for developing machine spatial experience. Building on the core idea that we humans construct stories to understand the environment and communicate with each other, I argue that spatial experience is a type of story we tell ourselves, driven by what we perceive and how we act within the environment. Through two initial case studies, I investigate the relationships between stories and spatial experience and introduce the anchoring framework, a computational model of constructing stories using emergent spatial, temporal, and visual relationships in perception. I evaluate this framework by performing a visual exploration study and analyzing how people verbally describe environments. Finally, I implement the anchoring framework for creating spatial experiences by machines. I introduce three examples, which demonstrate that machines can solve visuo-spatial problems by constructing stories from visual perception using the anchoring framework. This dissertation contributes to the fields of design, media studies, and artificial intelligence by advancing our understanding of human spatial experience from a story perspective; providing a set of tools and methods for creating and analyzing spatial experiences; and introducing systems that can understand the physical environment and solve spatial problems by constructing stories.

The Anchoring Hypothesis

Language is primarily an instrument of thought, and so are stories. I conjecture that the spatial aspects of our stories, which convey spatial and temporal relations among objects, events, and environments, are drawn from our spatial experiences. Just as we are able to verbally express these relations in our external stories, there must be cognitive mechanisms that allow us to extract these relations from our perceptions and actions and construct inner stories. These intuitions motivate what I call the anchoring hypothesis, which states that spatial experiences are inner stories that chain together perceptions and actions by making evident the spatial and temporal relations among them through the use of symbolic expressions.

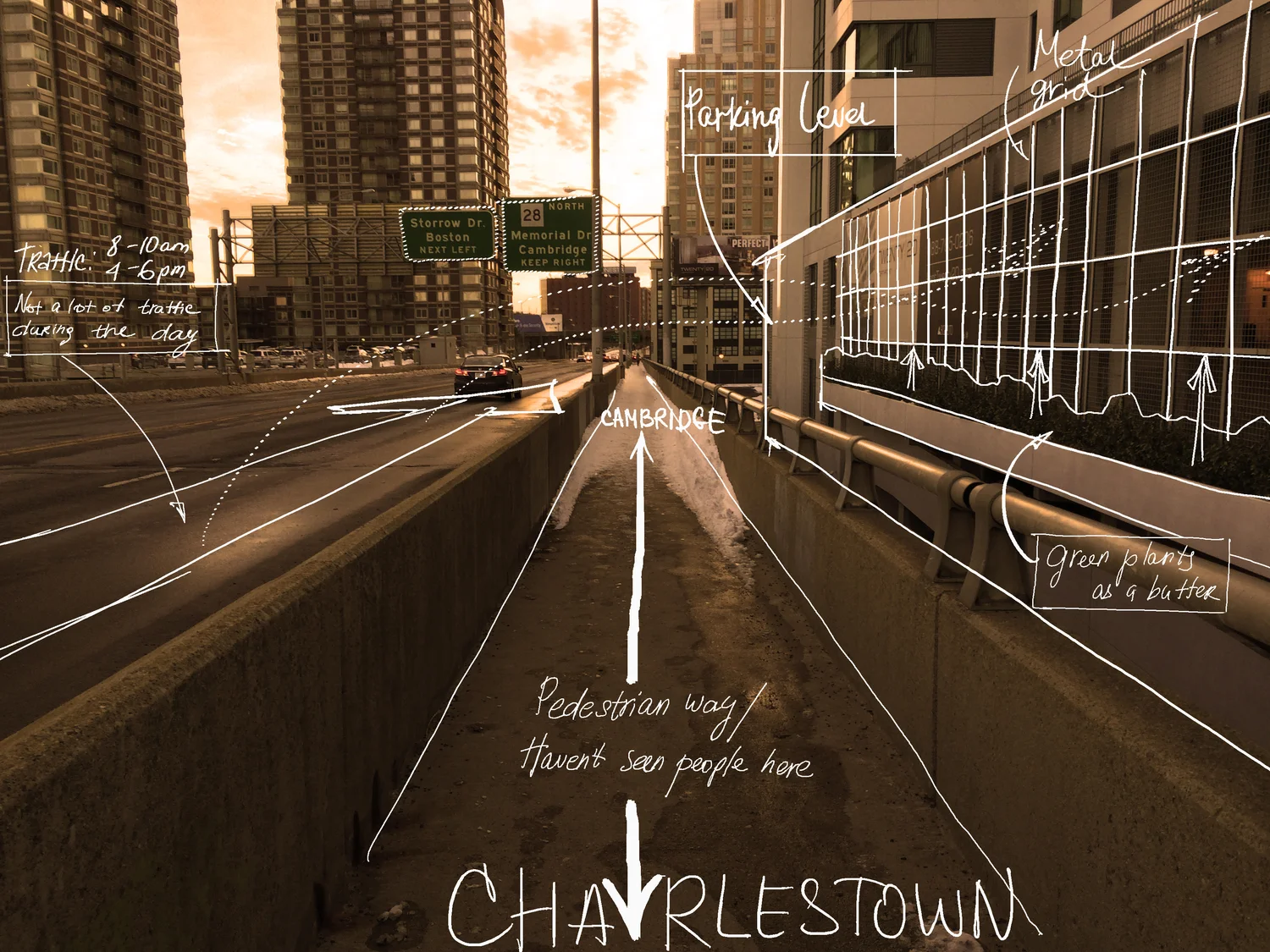

In order to test my hypothesis, I conducted case studies and experiments in which I investigated the relationships between stories, perceptions, and actions. Among those were (1) a study of how designers represent their spatial experiences using a variety of media, including drawings and physical models; (2) a virtual reality documentary that tells a story during which people spatially explore an environment; and (3) a virtual reality experiment in which subjects explore and verbally describe their environments as they do so. These studies provided initial evidence for my hypothesis and illustrated the role of inner stories in processing spatial experiences. I gathered further evidence by implementing artificial intelligence models which can connect visual and symbolic systems by composing inner stories. For example, I demonstrated that a robot can replace a cell-phone battery by following the steps provided in English in coordination with a vision system that recognizes cell-phone parts and identifies the spatial relationships among them. This experiment was particularly interesting because it illustrated how anchoring enables connecting symbolic and visual systems in order to solve problems.

Domain-Specific Representations (DSRs) allow us to identify symbols in relation to specific visual information. In this example, a depth map is a domain-specific representation that allows assigning distance-related symbols such as close and far. A visual reasoning system can refer to this representation to reason about distances.
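
The idea of reading distance symbols off a depth map can be illustrated with a minimal sketch. It is not the implementation used in the dissertation: the threshold values, the "mid-range" symbol, and the function names `distance_symbol` and `region_symbol` are assumptions introduced only for illustration. The depth map is taken to be a grid of distances in meters.

```python
from statistics import median

# Hypothetical thresholds in meters; the source does not specify any values.
CLOSE_BELOW_M = 1.0
FAR_FROM_M = 3.0


def distance_symbol(depth_m: float) -> str:
    """Map a single depth value to a distance-related symbol."""
    if depth_m < CLOSE_BELOW_M:
        return "close"
    if depth_m >= FAR_FROM_M:
        return "far"
    # "mid-range" is an assumed extra symbol for values between the thresholds.
    return "mid-range"


def region_symbol(depth_map, box):
    """Assign a symbol to a rectangular region of the depth map.

    box is (top, left, bottom, right) with exclusive bottom/right indices.
    The median depth is used so a few noisy pixels do not flip the symbol.
    """
    top, left, bottom, right = box
    values = [
        depth_map[row][col]
        for row in range(top, bottom)
        for col in range(left, right)
    ]
    return distance_symbol(median(values))


if __name__ == "__main__":
    # Toy 2x4 depth map: left half near the viewer, right half distant.
    depth_map = [
        [0.5, 0.5, 4.0, 4.0],
        [0.5, 0.5, 4.0, 4.0],
    ]
    print(region_symbol(depth_map, (0, 0, 2, 2)))
    print(region_symbol(depth_map, (0, 2, 2, 4)))
```

A reasoning system could then operate on the returned symbols rather than on raw pixel values, which is the role the depth map plays as a domain-specific representation here.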

See, Act, and Tell

I conducted the study with a total of 27 participants. The resulting dataset consisted of 6.5 hours of recordings, comprising each user's movement, gaze, visual modalities including RGB and depth, and time-stamped verbal descriptions. The dataset allowed correlating, across subjects, the use of spatial language with the corresponding visual data. The results showed a disproportionately frequent use of spatial language in comparison to benchmark datasets, which supported the idea that spatial language and the vision system cooperate during spatial exploration. I identified several groups of anchors from the dataset and tested their basic implementation.
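
One simple way to quantify how often spatial language appears in a verbal description is to measure the share of tokens that belong to a spatial lexicon. The sketch below is an assumption-laden illustration, not the analysis performed in the study: the `SPATIAL_TERMS` lexicon is a small hypothetical word list, and `spatial_rate` is a name introduced here.

```python
import re

# Hypothetical, deliberately small lexicon; the study's actual term list
# is not given in the source.
SPATIAL_TERMS = frozenset({
    "left", "right", "above", "below", "behind", "front",
    "near", "far", "close", "between", "under", "over",
    "inside", "outside", "next",
})


def spatial_rate(text: str) -> float:
    """Return the fraction of word tokens that are spatial terms."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in SPATIAL_TERMS)
    return hits / len(tokens)


if __name__ == "__main__":
    description = "The chair is left of the table"
    print(f"{spatial_rate(description):.3f}")
```

Computing the same rate over a benchmark corpus would give a baseline against which the descriptions can be compared.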

Visual Reasoning and Spatial Problem Solving

I developed a visual perception module for the Genesis Story Understanding System based on the anchoring hypothesis. I demonstrated the system's capabilities for spatial problem solving. The simulated robotic arm in the image performs a battery replacement task following the verbal instructions provided by Genesis.