Space first: A spatial inference model for multi-sensory perception

International Conference on Spatial Cognition (ICSC 2015)

In collaboration with Julia Litman-Cleper

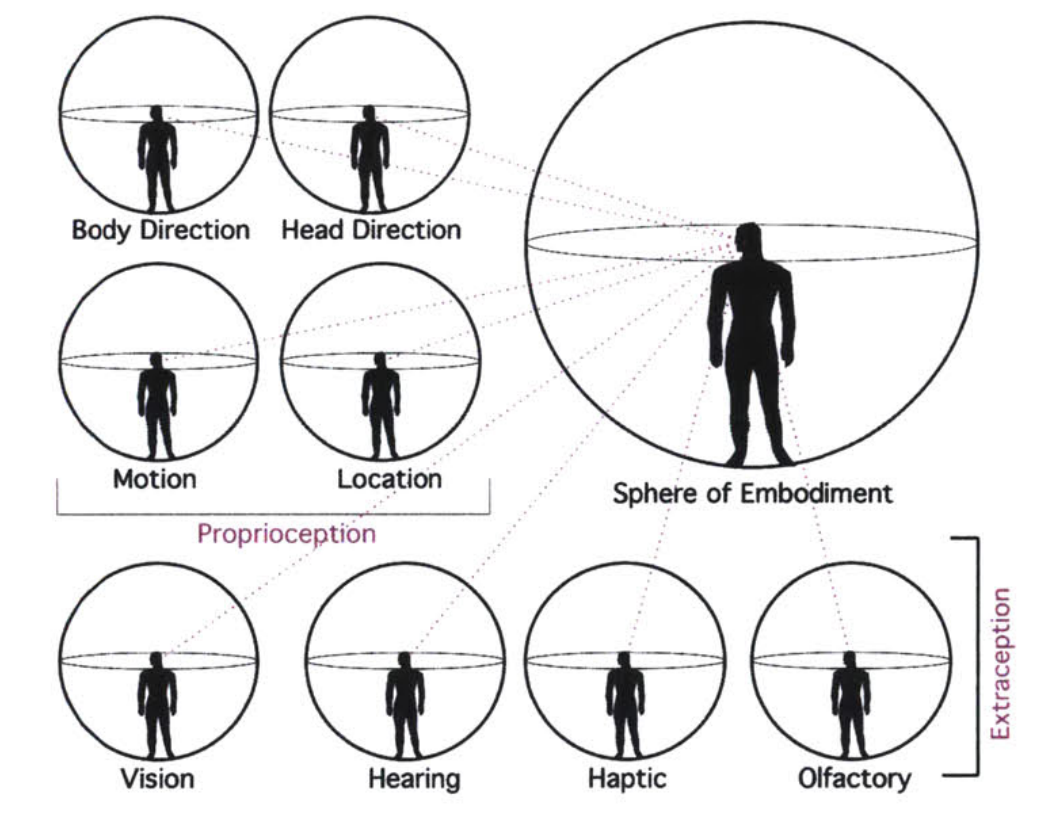

Background: Understanding the relation between action and perception requires discovering the underlying structure of multi-sensory neural representations. Building upon the idea that space is the primary representational model for integrating multi-sensory input, we hypothesize that the perception of space acts as a causal filter through which other modalities are conditioned and thus organized. Aims: The dynamics of place learning discovered in the “place cells” of the rat hippocampus show that a cognitive spatial map is at work even in the absence of visual or auditory cues. We seek evidence of these dynamics in human perception. We suggest that distance judgments are conditional on an a priori primary spatial perceptual construction.

Method: To modulate the spatial surroundings as well as the visual and auditory cues, we staged our experiment in a virtual reality environment. We designed three virtual spaces with different visual environmental constructs, in which a set of objects was presented at varying distances, accompanied by sounds produced by speakers placed in the space. We then asked subjects to report the distances of the objects and whether the sound was causally linked to the object. We developed a model of the ideal Bayesian observer to generate predictions based on the collected data and compared the behavioral data with the model’s results.
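The abstract does not specify the form of the ideal observer. As a rough illustration only, the sketch below shows the textbook building block of such models: combining independent Gaussian cues by weighting each with its precision (inverse variance). The function name `fuse_gaussian_cues`, the Gaussian noise assumption, and all numeric values are hypothetical and are not taken from the study.

```python
import math


def fuse_gaussian_cues(estimates, sigmas):
    """Fuse independent Gaussian cue estimates by precision weighting.

    Assumption (not from the study): each cue is an unbiased, independent,
    Gaussian-noise measurement of the same underlying distance.
    Returns the fused mean and the fused standard deviation.
    """
    precisions = [1.0 / (s ** 2) for s in sigmas]
    total_precision = sum(precisions)
    fused_mean = sum(p * x for p, x in zip(precisions, estimates)) / total_precision
    fused_sigma = math.sqrt(1.0 / total_precision)
    return fused_mean, fused_sigma


# Hypothetical values: a visual distance estimate of 4.0 m (sigma 0.5 m)
# and an auditory distance estimate of 6.0 m (sigma 1.0 m).
fused_mean, fused_sigma = fuse_gaussian_cues([4.0, 6.0], [0.5, 1.0])
print(f"fused distance: {fused_mean:.2f} m, sigma: {fused_sigma:.2f} m")
```

Under these assumptions the fused estimate is pulled from the visual cue toward the auditory cue in proportion to the auditory cue's relative reliability, and it is less uncertain than either cue alone. A fuller observer model would also have to infer whether the sound and the object share a common cause, which this sketch does not attempt.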

Results: We found evidence of a shift in visual distance judgments based on the provided auditory cue, even when the cues were not correlated.

Conclusions: Unpacking the perception of space reveals rich information-processing interactions that are conditioned first and foremost on the dynamic spatial structure of the environment.